The Modern Data Analytics Stack for AI-Driven Decision Intelligence

From Big Data to Big Questions (aka More Data, More Problems)

The data analytics industry has evolved significantly over the past decades. Long-time veterans will recall the first business intelligence offerings built for classical data warehouses from the likes of Oracle and IBM. ETL workflows reigned supreme as users struggled to get their limited data sets into standard formats for years.

What soon followed was an explosion of data from new types of applications and sources, in large part to the supremacy of mobile substrates. Cloud emerged as the de facto standard for computing, and it came with seemingly unlimited amounts of storage. Flexibility and scalability in processing became key. The 2010’s saw the rise of semi-structured data such as json and NoSQL databases such as MongoDB and Cassandra, to name a couple. MapReduce became all the rage as companies attempted to process a super fast-growing amount of data by way of ever-present hadoop clusters. On the front end, the rise of visualization tools made it easier to create charts and dashboards. And when all else failed, data science, the hottest topic of the day, became the go-to option for businesses to figure out what was going on with their data, although they saw more struggle than success.

Big Data, they said, was here.

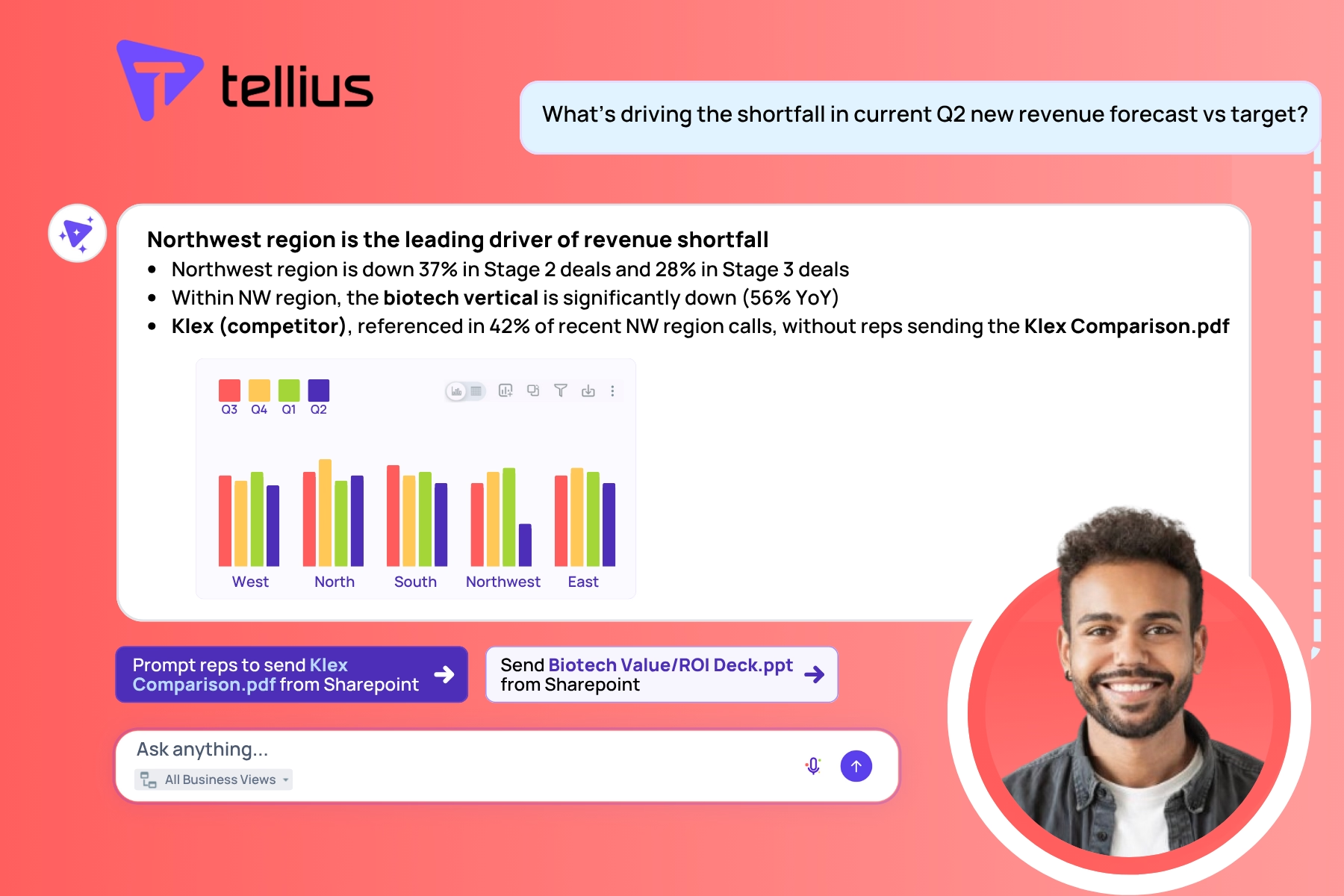

As were Big Problems. Business problems such as: what don’t I know about my business that these big data processes aren’t telling me? As a manager or business leader, I can more quickly see now what happened last quarter, month, week or yesterday. But why did it happen? What are the drivers of change or market forces that I need to be aware of so that I can make the right decisions today to better impact my bottom line tomorrow?

These were the Big Questions that remained. Together, these constituted the need for the next generation of data analytics architectures.

Figure 1: The Previous Generation Data Tech StackThis article is about the modern data analytics stack we see becoming popular, and how Tellius helps organizations evolve without succumbing to the costly mistakes of the past.

The Modern Data Analytics Stack – An Overview of Common Components

First, let’s better understand the components of modern data analytics architectures and their functions. This will allow us to bring it all together to understand how different offerings make use of these components to solve the big business problems of today and those on the horizon for tomorrow.

Image: Components of Modern Data Analytics Architectures

It All Starts With Your Sources of Data

The modern architecture is built with modern data sources in mind. Although traditional OLTP database systems and traditional data warehouses continue to exist, the modern flavor of these components offer pay-as-you-go models, built-in compression, and all of the bells and whistles we’ve come to expect from enterprise offerings, including security, concurrency, and, most of all, performance.

For example, Snowflake allows users to get up and running quickly with their cloud–based offering, and payment is based on actual usage, not complicated licensing dependent on laborious negotiations.

Data Prep and Transformations

The connection between high-powered data processing and your data sources is the ability to quickly and easily transform and ingest this data into the target system according to your business use case.

Because of the variety of business problems that exist to be tackled, there is no one-size-fits all approach to transforming data. Instead, the key is in flexibility and ease of implementation of any given set of transformations, or pipeline of transformations, to allow for more advanced system computation.

Luckily, the data warehousing industry has caught on to this, and vendors have unleashed an unprecedented set of capabilities that have proven to be game-changers. Consider Snowflake, with its cloud-based offering that offers the separation of compute and storage, and with that the ability to dynamically scale according to each purpose. This means that instead of the old ETL model, the more recent ELT pattern, in which the transformations can be applied on the target system itself, becomes faster, easier and more cost effective.

Approaches to Getting Value from Your Data

So you’ve got your data, and you’ve transformed it into something ready to be processed. What to do?

The approach you take to getting value from your data largely depends on which end of the business analyst-to-data scientist spectrum you find yourself.

Data analysts use BI and visualization tools to explore and analyze data. Unfortunately the process by which this happens has many manual steps to this: you create a hypothesis and try slicing and dicing to try to spot the key insight. The drawback here is obvious: how can someone find the right insight with so much data and so many possible combinations to test?

Data Science and Machine Learning

On the other end of the spectrum are those with the know-how to perform data science.

A common approach in recent years has been to apply machine learning algorithms to predict, classify, or group and cluster the data points. This means utilizing common algorithmic methods such as linear regression or generalized linear modeling, for example, for continuous target features, or logistic regressors, random forests, or decision trees for categorical or dimensional data labeling.

Platforms exist that will allow you to ingest data and train a model or models, and may even allow for hyperparameter tuning. Alternatively, data science libraries and developer-friendly packages exist that allow for this entire process to be written by data science experts end-to-end.

You might ask yourself “Wait…what if I didn’t understand all of that?”

That’s OK. And that’s part of the point.

Although much of data science and machine learning for business has been en vogue for at least half a decade, the truth is most businesses are no closer to leveraging these data science expert resources to quickly and easily find the answers they are looking for. The vast majority require heavy investment on both infrastructure and time to achieve the desired results for a target business problem — assuming that the data science resources exist on staff to begin with. This is true even when presented with a user interface to tune the required parameters.

Part of the problem is that you don’t run machine learning directly on your data warehouse – you need to stand up a Spark environment, for example, and move your data before the real work can even begin.

Nonetheless, a modern data analytics architecture is not complete without the ability to train, evaluate and deploy ML models.

End-User Consumption

The truly modern data analytics platform goes beyond raw horsepower in terms of compute power and the mere ability to create machine learning models. It does so by leveraging these sophisticated algorithms to produce a new user experience that makes consuming, accessing and ultimately generating answers to those Big Questions easier than was ever possible before.

This user consumption layer of the stack can come in a variety of forms based on the business needs. On one end is a familiar interface: the Visual Dashboard, but with much improved usability so that even lay users can find the key facts about their business. Think of PowerBI, or Looker – applications that allow users to create a variety of views into their data. Beware: even this approach can require significant time to perform the initial data modeling.

But what if the business need is more ad-hoc? In that case the ability to search becomes essential, and there is no easier way to do so than by asking questions using natural language, such as “How is revenue growing weekly for Canada?” or “What is the average weekly cost in Germany compared to Brazil?” In this way, a business user has the right answer at their fingertips, without having to worry about creating the perfect dashboard beforehand.

Finally, what if the user doesn’t know exactly what to ask, or how to know what is interesting? In these scenarios, it is important to have the ability to detect anomalies – points of interest that a user wouldn’t think to ask, or capture in a typical dashboard view. Moreover, the user shouldn’t have to explicitly ask anything at all. Instead, a one-time set-up whereby the user tells the system what sorts of information is important to them should be enough for the system to proactively surface these anomalies and insights. For instance, a user may be interested in changes in revenue, costs or sales order volume by region, department or brand. The user could get notified about what these changes are, and why they’re happening, either periodically or in an event-based fashion.

Tellius, Decision Intelligence, & The Blueprint for the Modern Data Analytics Stack

Most businesses lack the resources or expertise to be able to successfully incorporate all of the different pieces of this modern stack into their own architecture. Furthermore, it can be hard to decide which vendor cocktail will prove to be the winning solution for your business needs. Where to start?

Enter Tellius.

What if we told you that there exists a platform that is capable not only of checking the box on all of the above, but that this same platform hits each capability out of the park by leveraging next-generation state-of-the-art architecture?

What if we told you that business users with no requirement on data-processing sophistication could connect to your cloud-based data warehouse, deploy on cloud infrastructure quickly, easily, and securely, transform your data, and both generate ML-based insights and perform ad-hoc search queries, all while saving your favorite bits of each to your own personalized dashboard, all within one platform?

Sound crazy? You’d be crazy not to take advantage of it.

That is exactly what Tellius offers. Tellius bridges the gap between the data science and business user ends of the spectrum to empower all business users to take advantage of the latest innovations in computation with just a few clicks. No longer do business users have to wait on data analysts or data science teams to get answers fast.

In fact, Tellius goes one step further in almost every piece of the puzzle to deliver true Decision Intelligence.

Not only are anomaly detection and ad-hoc searches possible using natural language, but users can generate advanced insights to understand what’s driving segmentation, trends over time, and cohort behaviors, all while preserving the ability to create custom ML models for users with that level of expertise.

When it comes to leveraging data sources, most vendors in the space stop at integrations with cloud-based data warehouses like Snowflake. But Tellius innovates where few others would dare, such as by being able to connect and leverage direct integrations with modern BI tools like Looker, for instance, effectively translating their data and object model into something that Tellius not only understands, but can unleash.

No longer is there a need to laboriously explore different combinations of data or slice and dice until some nugget of an insight becomes evident. Instead, Tellius automatically computes multivariate relationships between all combinations of the features in your data to tell you what’s most interesting, what’s changed, and why.

Elasticity & Shared Infrastructure: The Tellius Unified Runtime & Elastic Architecture

At this point, you might think that Tellius has innovated in a lot of different areas including natural language search and AI-based insights. That is true, but not quite the whole story.

At Tellius, we like to ask, can we do more? What is the next step? Clearly, it is not only to provide multiple different capabilities to the modern business user, but to offer these in a way that makes operating between these different modes of data discovery seamless and scalable.

Enter Tellius’s Unified Runtime & Elastic Architecture.

Tellius’s architecture takes all of these different pieces and allows them to work together from an infrastructure perspective to optimize the user experience even further.

Consider a common use case: you run an ad hoc natural language search on your data, and from there an “aha” moment drives you to obtain further insights using AI techniques. This would take any other system two separate sets of infrastructures, requiring loading and reloading of data in order to achieve this.

But not Tellius.

We’ve considered the primary business user from a user experience perspective and translated this into a one-of-a-kind architecture that makes not only the sharing of resources possible, but goes on step further in being able to leverage dynamic scaling and re-use of elastic compute behind-the-scenes so that a business user can go back and forth between ad hoc searches and AI-based insights as much as they please. Leave the hard part to Tellius.

The above image is taken from our November webinar on the modern data stack, an excerpt of which can be found here.

For this reason, Tellius has been able to support a number of large businesses with a diverse set of business needs and use cases. What’s more, is that business’s needs tend to change, and as they do, the platform’s capabilities allow them to easily shift gears.

Figure 3: How Tellius Fits into the Modern Data Landscape

What’s Next

Tellius is live and currently supports a scalable pay-as-you-go model that leverages an elastic architecture. This lets you get started today with one business goal in mind, and expand to other business needs over time without having to worry about sunk costs or expensive upfront set-ups. Start somewhere, but feel confident that from here, you can go anywhere.

Get release updates delivered straight to your inbox.

No spam—we hate it as much as you do!

Diagnostic Analytics: Using AI to Get to the 'Why' Behind What Happened

Here are the key forms of diagnostic analytics, the limitations of traditional manual approaches, and the benefits of AI-powered diagnostic analytics.

RevOps Intelligence Redefined: AI-Powered Agents Meet Unified Knowledge Layer

Learn how AI-powered variance analysis is transforming how organizations uncover and act upon financial insights.

How CPG Category Management Teams Get Faster Value from Syndicated Data with Self-Service Analytics

Data-driven decision-making is vital to CPG companies as consumer preferences and buying patterns change. Learn how self-service analytics can help.