PMSA 2026 New Orleans: Five Shifts Pharma Analytics Is Making in 2026


The PMSA Annual Conference in New Orleans this week was a whirlwind. The official theme was Convergence of Data, Talent & AI, but in the hallways, in the coffee line (or tea, in my case), in front of the 35 posters, and during the second-night magic act, the conversation had already moved past convergence. Nobody was debating whether to adopt AI. They were asking why their second pilot had stalled, why field teams weren't trusting the answers, and what their architecture had to look like to support the four production deployments someone now owed leadership by Q1 2027.

PMSA has its own texture — collegial and scientific in a way I don't always associate with pharma commercial conferences. It felt closer to a working research meeting. Poster authors were delighted to walk you through their methodology. Wonky, in the best way.

What follows is my attempt at capturing what mattered for pharma analytics teams heading into the back half of 2026, drawn from the keynotes and breakouts I caught, the posters I lingered at, and the conversations that ran past their natural endpoint. I missed sessions; everyone does. The five shifts below are the ones that came up often enough that I'd bet on them showing up in your team's planning by next quarter.

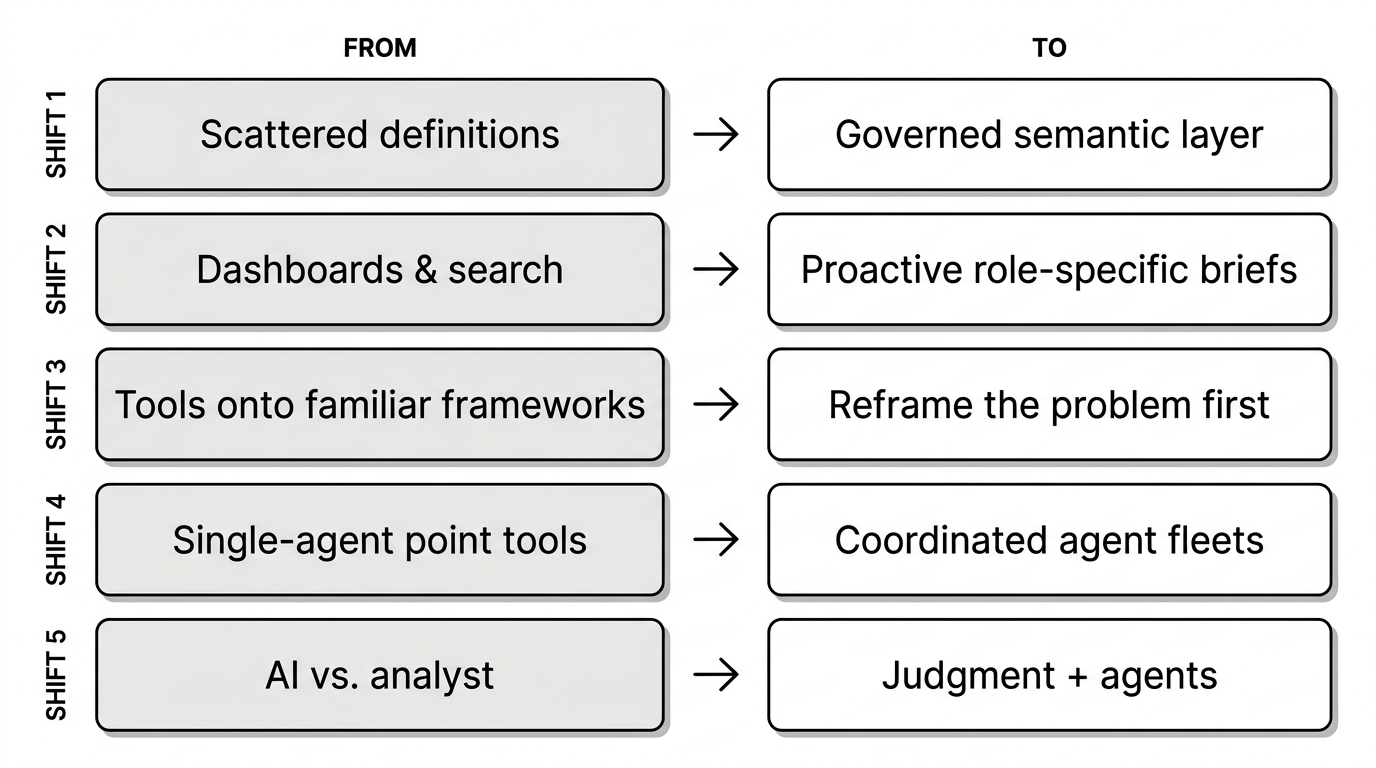

Five shifts pharma analytics is making in 2026

Shift 1: The semantic layer is the new table stakes

From definitions scattered across dashboards to one governed layer behind every AI answer

Three years ago this was a back-office data engineering concern. At PMSA 2026 it was the most-mentioned architectural element of the week. The semantic layer anchored the Day 2 keynote reference architecture as the labeled block between the data ecosystem and the agent stack. It surfaced in an AstraZeneca Medical Affairs platform exposing six persona-specific experience layers on a single definition foundation. It came up in posters from Eisai and Datazymes as a control plane or bridge layer. And it sat underneath a Wednesday session where a Novo Nordisk team described cutting field analytics latency from roughly ten days to two by anchoring the work on one governed definition layer.

The complication came up in nearly every booth conversation I had: definitions are fragmented across systems and stewards. Terms like "monthly revenue," "active prescriber," and "engaged HCP" each mean something different in three different dashboards, and AI projects sitting on top of that ambiguity produce answers nobody on the brand team will sign their name to. Novartis's Chief Insights and Decision Science Officer named it directly in the Day 1 keynote: teams are adding new tools on top of infrastructure they haven't actually examined. If you haven't figured out your semantic layer, everything else is shaky.
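To make the fragmentation problem concrete, here is a minimal sketch of the kind of check a team could run before building anything on top of its definitions. The dashboard names, metric names, and definition text are all hypothetical and invented for illustration; nothing here comes from a PMSA session or a specific semantic-layer product.

```python
from collections import defaultdict

# Hypothetical metric definitions as they might be documented in three
# dashboards. All names and definition text are illustrative assumptions.
dashboard_definitions = [
    {"dashboard": "brand_performance", "metric": "active_prescriber",
     "definition": "at least 1 TRx in trailing 90 days"},
    {"dashboard": "field_activity", "metric": "active_prescriber",
     "definition": "at least 1 TRx in trailing 180 days"},
    {"dashboard": "market_access", "metric": "active_prescriber",
     "definition": "at least 1 TRx in trailing 90 days"},
    {"dashboard": "brand_performance", "metric": "monthly_revenue",
     "definition": "net sales by calendar month"},
    {"dashboard": "field_activity", "metric": "monthly_revenue",
     "definition": "net sales by calendar month"},
]


def find_conflicting_metrics(definitions):
    """Return metrics that carry more than one distinct definition."""
    by_metric = defaultdict(lambda: defaultdict(list))
    for row in definitions:
        by_metric[row["metric"]][row["definition"]].append(row["dashboard"])
    return {
        metric: dict(variants)
        for metric, variants in by_metric.items()
        if len(variants) > 1
    }


conflicts = find_conflicting_metrics(dashboard_definitions)
for metric, variants in conflicts.items():
    print(f"{metric}: {len(variants)} competing definitions")
    for definition, dashboards in variants.items():
        print(f"  '{definition}' used by {', '.join(dashboards)}")
```

A governed semantic layer is, in effect, the state where this check returns nothing: each metric name resolves to exactly one definition, and every dashboard or agent reads from it.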

Shift 2: Push, not pull

From dashboards and conversational search to role-specific intelligence, delivered

The shift from a pull model (dashboards, then conversational search) to a push model is now the working direction for the teams furthest along. A BMS Director of AI laid out a gross-to-net early-warning system that surfaces channel variance before quarter-close. A Novartis Executive Director of Forecasting argued the same case from the demand side: subnational forecasting can't be a static target-setting exercise when local demand shifts, payer changes, and field execution variance now move faster than quarterly cycles can absorb them. A Bayer Executive Director of Commercial Data & Analytics walked the floor through a multi-year progression from AI as role-specific interns to experts to push-based intelligence delivered proactively to AVPs, AGMs, and the home office.

Each generation reduces the distance to action. A dashboard requires you to know what to look for. A search interface requires you to ask the question. A push system surfaces what changed and why before either of those steps has happened.

The catch — and this came up unprompted in three separate booth conversations — is that push only works when the substrate is already there: governance, role-aware permissions, observability, and (back to Shift 1) a real semantic layer. Most orgs trying to adopt push are still on dashboards-and-search and haven't funded the substrate. Without it, push isn't intelligence. It's noise.
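As a rough illustration of the difference between push and pull, the sketch below filters a set of metric changes down to the material ones and routes each to the roles whose scope covers it. Everything in it is an assumption made for the example: the role names, region scopes, the 10% threshold, and the hard-coded "likely driver" text, which in a real system would come from upstream analysis and governed definitions.

```python
from dataclasses import dataclass


@dataclass
class MetricChange:
    metric: str
    region: str
    previous: float
    current: float
    likely_driver: str  # hard-coded here; assumed to come from upstream analysis

    @property
    def pct_change(self) -> float:
        return (self.current - self.previous) / self.previous * 100


# Hypothetical role scopes standing in for role-aware permissions.
ROLE_REGIONS = {
    "AVP_Northeast": {"Boston", "New York"},
    "AVP_South": {"Atlanta", "New Orleans"},
}

THRESHOLD_PCT = 10.0  # illustrative materiality threshold


def build_briefs(changes, role_regions, threshold_pct=THRESHOLD_PCT):
    """Group material changes by role so each person sees only their scope."""
    briefs = {role: [] for role in role_regions}
    for change in changes:
        if abs(change.pct_change) < threshold_pct:
            continue  # below threshold: kept out of the brief to avoid noise
        for role, regions in role_regions.items():
            if change.region in regions:
                briefs[role].append(
                    f"{change.metric} in {change.region} moved "
                    f"{change.pct_change:+.1f}% "
                    f"(likely driver: {change.likely_driver})"
                )
    return briefs


changes = [
    MetricChange("TRx", "Boston", 200, 150, "formulary change"),
    MetricChange("TRx", "Atlanta", 100, 104, "seasonality"),
    MetricChange("NBRx", "New Orleans", 80, 100, "new campaign"),
]
briefs = build_briefs(changes, ROLE_REGIONS)
for role, lines in briefs.items():
    print(role, lines)
```

The threshold and the role scoping are doing the work here. Remove either one and every recipient gets every change, which is the noise problem described above.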

Shift 3: Mindset transformation beats tool deployment

From deploying tools onto familiar frameworks to reframing the problem entirely

The two keynotes converged on this one. The shift they were naming is internal: from reaching for the familiar framework to reframing the problem first. Novartis's Chief Insights and Decision Science Officer led with the advice to aspire to be the wisest person who reframes the problem, and spent a decent chunk of the discussion encouraging practitioners to hold their assumptions lightly and to refuse to default to familiar frameworks when the problem has changed underneath them. Merck's SVP of Digital Human Health offered an analogy: in 2005, people genuinely argued that the BlackBerry keypad was better than the touchscreen. The point wasn't that BlackBerry was wrong. It's that organizations rationalize the obsolete with sophisticated arguments right up until the moment the argument collapses. He challenged attendees to find their own teams' BlackBerry keypads.

Both keynotes also referenced the talent gap. Pharma is investing heavily in technology and data infrastructure, but the people capable of extracting value from those investments aren't keeping pace. Multiple breakouts surfaced this and nobody had a clean answer. The Tuesday panel with Boehringer Ingelheim, Genentech, and Bayer addressed it directly under the framing question of whether agentic AI replaces analytics, and the consensus was that the analyst role evolves rather than disappears — value migrates toward interpretation and oversight rather than the mechanics of data processing. The technology gets cheaper every year. The talent does not, and orgs that don't help their teams make that shift are going to lose them.

Shift 4: Agents work in fleets, not solo

From single-agent point tools to an ecosystem of reusable agent fleets

The Day 2 keynote reference architecture was the cleanest illustration: a stack of foundational agents (SQL, RAG, search, visualization, evaluation) sitting underneath applied agents (financial performance, NBA/NBE, sentiment, patient journey, pre/post-call), sitting underneath domain agents (marketing, omnichannel, medical), sitting underneath persona-specific planner agents. Bayer's panel described the same pattern from the build side as an "AI Factory": a reusable ecosystem spanning knowledge layers, agent orchestration, and AgentOps. A Merck Director of Commercial Spend Optimization described his team's orchestrator as being like the human brain: it interprets intent, decides which modules to call, and reflects an explicit choice of federated mini-models over a single monolithic foundation model.
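A toy sketch of that layering may help show why the fleet, not the individual agent, becomes the unit of reuse. It is not the keynote architecture or any vendor's implementation: the agent bodies are stubs, the keyword routing is a stand-in for real intent interpretation, and every name is hypothetical.

```python
from typing import Callable, Dict, List


# Foundational agents: small reusable capabilities. Bodies are stubs.
def sql_agent(task: str) -> str:
    return f"[sql] rows for: {task}"


def rag_agent(task: str) -> str:
    return f"[rag] passages for: {task}"


def visualization_agent(task: str) -> str:
    return f"[viz] chart for: {task}"


FOUNDATIONAL: Dict[str, Callable[[str], str]] = {
    "sql": sql_agent,
    "rag": rag_agent,
    "visualization": visualization_agent,
}

# Applied agents are declared as ordered lists of foundational agents,
# so a new applied agent reuses existing building blocks.
APPLIED: Dict[str, List[str]] = {
    "financial_performance": ["sql", "visualization"],
    "sentiment": ["rag"],
}

# Keyword routing is an assumption standing in for intent interpretation.
INTENT_KEYWORDS: Dict[str, str] = {
    "revenue": "financial_performance",
    "spend": "financial_performance",
    "feedback": "sentiment",
}


def orchestrate(request: str) -> List[str]:
    """Pick an applied agent for the request and run its foundational steps."""
    lowered = request.lower()
    for keyword, applied_name in INTENT_KEYWORDS.items():
        if keyword in lowered:
            return [FOUNDATIONAL[name](request) for name in APPLIED[applied_name]]
    raise ValueError(f"No applied agent registered for request: {request!r}")


for step in orchestrate("Why did revenue dip in Q2?"):
    print(step)
```

Adding a third applied agent here means adding one entry to `APPLIED`, not buying and integrating a new tool. That is the practical difference between a fleet and a collection of point solutions.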

What I kept hearing in side conversations: most pharma orgs are still buying single-agent point tools, deploying them on the team that bought them, and discovering that what works on one team doesn't lift cleanly into another. The unit of deployment is shifting from the model to the system of agents. That changes how teams budget, architect, and hire. A model is a procurement decision. A fleet is an operating model — and most orgs aren't yet structured to run one.

Shift 5: Human-in-the-loop is being repositioned, not removed

From an "AI vs. analyst" mentality to a partnership (analysts = judgment, agents = repeatable work)

The Tuesday panel I keep coming back to addressed this head-on: agents handle the repeatable, humans handle judgment, relationships, and strategy. Lilly's VP of Business Insights and Analytics, on a separate Day 1 panel, framed the talent profile that wins as the bridge between science, data, and business rather than deeper technical depth in any one of them. A Daiichi Sankyo Oncology Patient Journey Analytics lead made the same point in measurement language: machine-driven attribution plus human-guided interpretation is the mechanism that turns raw model output into business impact.

What's still unresolved is the regulatory and compliance ceiling, which is real. Multi-variable business reasoning still has to be owned by a human who can be accountable for the conclusion. AI confidence is not AI accuracy, and accuracy is not adoption. The pharma orgs moving fastest have stopped trying to engineer their way to trust through model performance and started engineering for explainability, governance, and integration into the existing decision cadence.

What's actually working

A handful of sessions stood out for showing AI deployments that have moved past pilot scale into commercial impact. Surface-level mentions only — the methodologies are worth a separate post.

BMS — gross-to-net forecasting that surfaces variance early

A BMS Director of AI, Policy & Channel Business Insights & Technology presented an AI-enabled gross-to-net forecasting system targeting a 20–50% reduction in forecast error. The architecture is event-aware: contract changes, legislative effective dates, and product launches go in as inputs rather than getting discovered after the fact. The governance pattern that matters here: BMS runs an internal AI Accelerate program supplying dedicated business owners and structured governance to each project. A poster from the same team extended the thesis into media-mix modeling. The BMS material had a consistent shape: AI operationalized in finance-adjacent commercial decisions, with the governance scaffolding visible throughout.

Daiichi Sankyo — HCP-tactic-level multi-touch attribution

A Daiichi Sankyo Oncology Patient Journey Analytics lead presented a multi-touch attribution methodology that works at the HCP-tactic level rather than the channel level. The argument: conventional pharma omnichannel measurement tracks channels in silos and plans on reach and frequency rather than proven conversion impact. The more useful deliverable, in my view, was a six-question MTA Readiness Survey that quietly tells most orgs they need to fix their HCP master data and analytics-to-brand cadence before they hire someone to build the model. The model is the easier half.

Merck — orchestrator-as-brain for commercial spend

A Merck Director of Commercial Spend Optimization presented an always-on agentic workflow built around an orchestrator he described as being like the human brain: interpreting intent, deciding which modules to call, and coordinating modular components underneath. The team chose federated mini-models over a single monolithic foundation model. The grounding example was a $5M social-media-spend scenario with AI determining placement and allocation against defined rules. Per the speaker, the harder work isn't the model. It's leadership sponsorship and the operating discipline to bring stakeholders along.

Pfizer + Sanofi — agents scoped narrowly, embedded in workflow

A Pfizer Director of Forecasting CoE presented an agentic forecasting product embedded inside the team's existing epi-based methodology rather than replacing it. A Sanofi Director of U.S. Value and Access presented a Digital Twin of the COPD ecosystem to inform targeting, launch sequencing, and access planning. Both share a pattern worth naming: the agents are scoped narrowly and embedded inside existing workflows rather than deployed as horizontal AI assistants. The unit of value isn't the tool. It's the workflow it changes.

Two more worth flagging in passing. A Bayer Executive Director of Commercial Data & Analytics Solutions walked through a multi-year progression from AI as role-specific interns to experts to push-based intelligence — the cleanest articulation of the pull-to-push trajectory I heard all week. A Novo Nordisk Director of Business Applications described an operating change that took field analytics latency from roughly ten days to roughly two, anchored on a governed semantic layer.

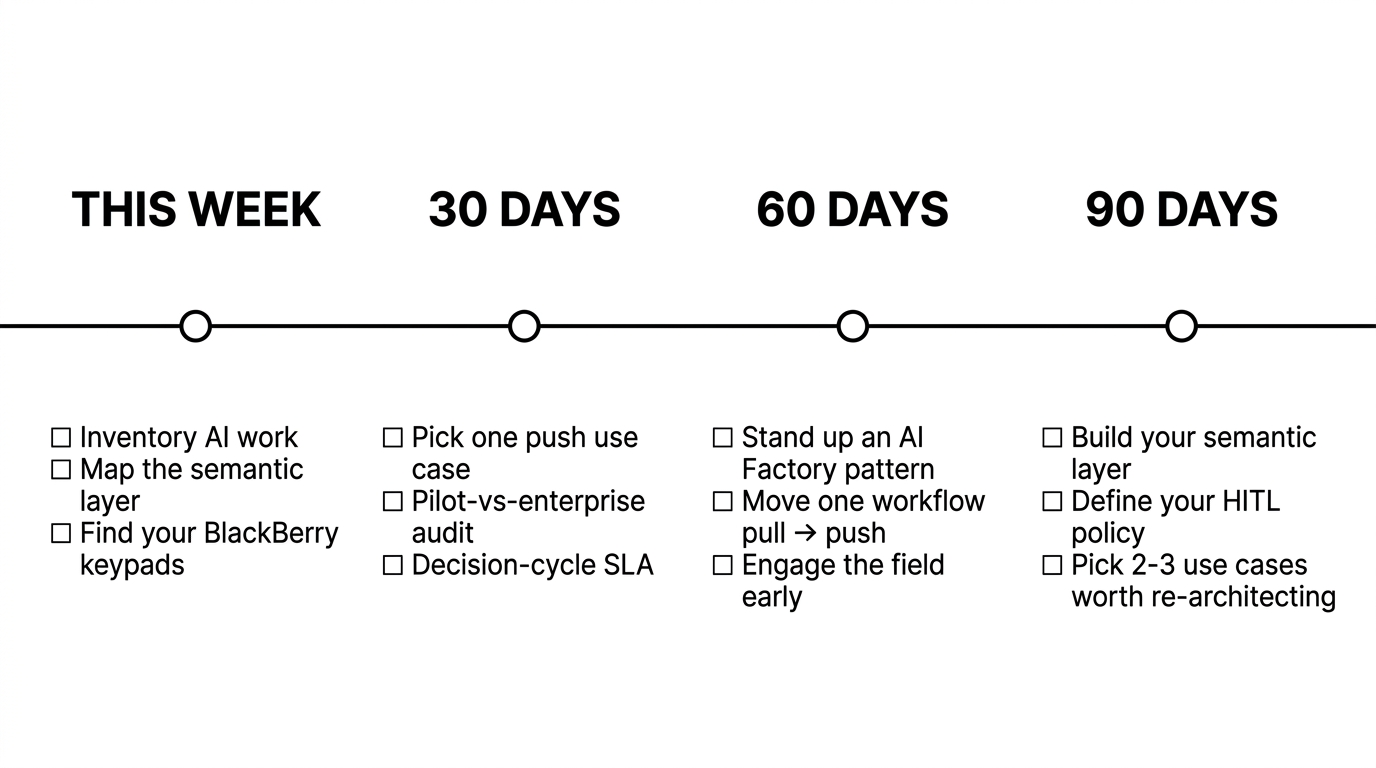

What teams can do now

PMSA speakers didn't just describe problems. They offered specific actions. Here's a curated set, organized by what you could do this week and at 30, 60, and 90 days out.

This week

- Inventory your AI initiatives. Count them across analytics, commercial, medical, market access, and IT. If the number is more than five, the coordination problem is bigger than the model problem.

- Map your semantic layer (or its absence). If "monthly revenue" or "active prescriber" means something different in three different dashboards, that gap sits in front of every AI investment your org makes next year.

- Find your BlackBerry keypads. What process is your team still defending because it's familiar (like keyboards once were on phones), even though the evidence points the other way?

30 days

- Pick one push use case. Not a chatbot. Not a search interface. One role-specific brief that proactively tells someone what changed and why. Pick one persona: AVP, AGM, brand director, KAM.

- Run a pilot-vs-enterprise audit. Classify every AI initiative your team owns as pilot or enterprise grade. Production criteria are governance, observability, persistent context, and integration with the system of record. If the same answer can come from two different paths, it's still a pilot.

- Write down your decision-cycle SLA. Pharma question → analyst answer → decision-maker action. Measure it. If it's longer than seven days for routine commercial questions, that's the actual gap.
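The audit and the SLA check in the list above are both simple enough to start in a notebook. The sketch below is one hedged way to do it: the four criteria and the seven-day threshold come from the list, while the initiative names, check results, and dates are made up for illustration.

```python
from datetime import date

# The four production criteria named in the audit bullet above.
PRODUCTION_CRITERIA = (
    "governance",
    "observability",
    "persistent_context",
    "system_of_record_integration",
)

SLA_DAYS = 7  # threshold for routine commercial questions


def classify_initiative(checks):
    """Return ('enterprise' or 'pilot', list of missing criteria)."""
    missing = [c for c in PRODUCTION_CRITERIA if not checks.get(c, False)]
    return ("enterprise" if not missing else "pilot", missing)


def decision_cycle_days(asked: date, decided: date) -> int:
    """Calendar days from the question being asked to the decision."""
    return (decided - asked).days


# Hypothetical initiatives and self-reported check results.
initiatives = {
    "gtn_early_warning": {c: True for c in PRODUCTION_CRITERIA},
    "field_chatbot": {"governance": True, "observability": False},
}

audit = {name: classify_initiative(checks) for name, checks in initiatives.items()}
for name, (grade, missing) in audit.items():
    print(f"{name}: {grade}" + (f" (missing: {', '.join(missing)})" if missing else ""))

# Hypothetical request: asked on a Monday, decided eleven days later.
cycle = decision_cycle_days(date(2026, 5, 4), date(2026, 5, 15))
print(f"decision cycle: {cycle} days, within SLA: {cycle <= SLA_DAYS}")
```

Treating a missing check as a failed check is deliberate in this sketch: an initiative that cannot say whether it has observability is counted as not having it.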

60 days

- Stand up a small "AI Factory" pattern. Even at modest scale: a shared knowledge layer, reusable foundational agents, a governance frame, AgentOps. The Bayer structure works at the team level, not just enterprise.

- Move one workflow from pull to push. Quarterly business review prep is the easiest first target. It's the work everyone wants automated and nobody enjoys.

- Get the field involved early. Multiple sessions surfaced field skepticism as the number-one adoption blocker. The "AI ambassador" pattern — early adopters within the field force teaching their peers — surfaced more than once.

90 days

- Build (or formalize) your semantic layer. This is the architectural prerequisite for everything in 2027. It's also the unlock for governed self-service, conversational analytics, and any agent that has to compose answers across multiple sources.

- Define your human-in-the-loop policy. Where does the agent decide? Where does it recommend? Where does the SME approve? Write it down before you scale anything.

- Pick the two or three use cases worth re-architecting around. Not thirty. Not five. Two or three. The pilot graveyard exists because everyone tried to scale fifteen at once.
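Writing down the human-in-the-loop policy can be as literal as a lookup table. Here is a minimal sketch, assuming three autonomy levels that mirror the decide / recommend / approve questions above. The action names are hypothetical, and defaulting unlisted actions to SME approval is a design assumption rather than something any speaker prescribed.

```python
from enum import Enum


class Autonomy(Enum):
    AGENT_DECIDES = "agent_decides"        # agent acts without review
    AGENT_RECOMMENDS = "agent_recommends"  # agent proposes, a human acts
    SME_APPROVES = "sme_approves"          # agent acts only after sign-off


# Hypothetical policy table; action names are illustrative.
HITL_POLICY = {
    "refresh_weekly_dashboard": Autonomy.AGENT_DECIDES,
    "flag_forecast_variance": Autonomy.AGENT_RECOMMENDS,
    "change_hcp_targeting_list": Autonomy.SME_APPROVES,
}


def required_mode(action: str) -> Autonomy:
    """Look up the policy; unlisted actions get the most restrictive mode."""
    return HITL_POLICY.get(action, Autonomy.SME_APPROVES)


def can_execute(action: str, sme_approved: bool = False) -> bool:
    """Whether the agent itself may carry out the action right now."""
    mode = required_mode(action)
    if mode is Autonomy.AGENT_DECIDES:
        return True
    if mode is Autonomy.SME_APPROVES:
        return sme_approved
    return False  # AGENT_RECOMMENDS: the agent only proposes


for action in ("refresh_weekly_dashboard", "flag_forecast_variance",
               "change_hcp_targeting_list", "reallocate_budget"):
    print(action, required_mode(action).value, can_execute(action))
```

The value of the table is less in the code than in the argument it forces: someone has to decide, per action, who is accountable before anything scales.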

The quote that stuck with me

A line worth taping to the wall, from the Day 2 keynote:

"The barrier to commercial transformation is not technology or data availability. It's leaders and organizations still anchored in traditional paradigms."— Merck SVP, Digital Human Health

If a tool deployment doesn't change the underlying workflow, the tool isn't the answer.

If your analytics team is buried in ad hoc requests

The shifts above all trace back to one structural problem: pharma analytics teams are buried in ad hoc requests, and the answers aren't reaching the people making decisions fast enough to matter.

That's the problem Tellius solves. If you're heading into the back half of 2026 trying to figure out how to free your analytics team from the request queue and unblock commercial teams to get trusted answers in the field, in the brand, and in the C-suite, let's talk →
