5 Common Pitfalls to Avoid When Launching a Self-Service Analytics Program

Who among us wouldn’t absolutely love to have a magic wand that instantly turns all of our business users and data consumers into fully empowered self-serve whizzes?

Imagine users seamlessly analyzing their own data on the fly while data teams are finally free of the never-ending onslaught of mundane BI dashboard updates and can focus their attention on higher-value projects for the business. If that doesn’t sound like utopia, then I don’t know what is!

Yet in practice, this self-serve vision can be challenging to achieve. The reasons are varied and often context-specific (and the source of many LinkedIn comment-section debates).

In a previous post, we covered five best practices for launching self-serve analytics across your organization. Now, we’ll delve into some common pitfalls we have seen and, based on what we’ve learned, how to avoid them to maximize your odds of success.

Pitfall #1: Everything, every user, all at once

The pitfall:

Launching a new self-service analytics platform to a large group of business users and expecting full, 100% adoption across the entire user base (i.e., our utopia is instantly achieved!).

Unfortunately, the reality we see is that not everyone is going to pick up self-service right off the bat. Within any business unit, there is a wide spectrum of technical skill sets, data savviness, and perhaps most importantly, an open mindset to try out new technology that disrupts the status quo. There will more than likely be individuals who resist change or new ways of working—and that’s okay!

You may already be familiar with Rogers’ Diffusion of Innovation concept. The idea is that with any new innovation, there is a broad spectrum of how users will engage with the technology. “Innovators” and “Early Adopters” are eager to try something new, even if it comes with an initial learning curve or hiccups in the experience. “Late Majority” and “Laggards” are more risk-averse and tend to want to see others try out the new tech before taking the plunge themselves.

The pitfall is painting with too broad a brush when determining our initial target audience. We launch our self-serve platform to all users all at once without having first built consensus or excitement among an Early Adopter cohort.

The result is often wasted energy spent trying to push self-service analytics onto users who are not yet ready. Even worse, some of your Late Majority and Laggard users may pour cold water on your efforts with skepticism or cynicism, raising other challenges that then cascade into the rest of the user base.

How to avoid:

During the implementation phase, launch an Early Adopter program by first identifying which business users or analysts are eager to adopt self-serve analytics. You can identify this cohort through conversations with business leaders or managers who can help recommend folks from their teams to pull in. Another method is to run a survey across your target user base to assess interest in being a part of the Early Adopter program.

After identifying this cohort, build consensus with them by launching a series of enablement sessions. Iterate with that group first, collect feedback, and refine your solution.

Key takeaway: Focus on first establishing an empowered, engaged group of Early Adopter champions. Then, shift attention toward scaling out self-service to the rest of the addressable user base, leveraging your Early Adopters as influencers along the way.

Pitfall #2: Success measures, shuck-shmesh shmeasures!

The pitfall:

Not setting success measures or objectives for your program before embarking on the execution of your vision.

For a self-service data program to be successful, analytics teams must define what success looks like long before a launch. This sounds obvious—however, I’ve seen customers misfire here in two critical ways:

- Not stepping back to define what measures or goals need to be achieved (and by when) in order to consider the self-serve initiative a success

- Not communicating this vision and success criteria to leadership and business stakeholders upfront prior to rollout

Ambiguous or undefined success criteria can translate into misaligned expectations, impatience, and eventual lack of support by leadership for the initiative.

How to avoid:

With any self-serve analytics effort, there is a break-even point: the point at which (1) the number of users adopting self-service and (2) the number of queries/analyses they execute autonomously add up to a business impact that exceeds the cost of implementing and maintaining the self-serve platform.

However, don’t get too hung up on isolating an exact break-even number. Rather, you want to have a directional sense of the size and scale of usage that would constitute a massive success, moderate success, or failure.
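A back-of-the-envelope calculation is usually enough to get that directional sense. The sketch below is illustrative only: the function names and every input (analyses per user, value per analysis, monthly platform cost) are hypothetical placeholders, not benchmarks, and should be replaced with your own estimates.

```python
import math


def monthly_net_impact(active_users, analyses_per_user, value_per_analysis, monthly_cost):
    """Estimated monthly value of self-serve analyses minus platform cost."""
    return active_users * analyses_per_user * value_per_analysis - monthly_cost


def break_even_users(analyses_per_user, value_per_analysis, monthly_cost):
    """Smallest number of active users whose estimated value covers the cost."""
    value_per_user = analyses_per_user * value_per_analysis
    if value_per_user <= 0:
        raise ValueError("each active user must generate positive value")
    return math.ceil(monthly_cost / value_per_user)


# Hypothetical inputs: 8 analyses per user per month, $50 of value per
# analysis, and $20,000 per month to run and maintain the platform.
users_needed = break_even_users(8, 50, 20_000)
print(f"Break-even at roughly {users_needed} active users per month")
```

Running the same function with pessimistic and optimistic inputs gives a range rather than a single number, which is typically all you need to tell a massive success from a moderate one or a failure.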

One of our large enterprise clients targets monthly active usage by more than 50% of their target audience. Another customer looks primarily at the volume of queries and data insights generated autonomously on a monthly basis. They track these numbers alongside their BI/data team backlogs to see if there is a measurable decrease in requests submitted.
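As one way to track a usage-based measure like the first example, the following sketch computes a monthly active rate from a list of usage events. It assumes, hypothetically, that your platform can export events as (user, date) pairs; the function name, the sample users, and the event data are all made up for illustration.

```python
from datetime import date


def monthly_active_rate(usage_events, target_users, year, month):
    """Share of target users with at least one usage event in the given month."""
    target = set(target_users)
    if not target:
        raise ValueError("target_users must not be empty")
    active = {
        user
        for user, day in usage_events
        if user in target and day.year == year and day.month == month
    }
    return len(active) / len(target)


# Hypothetical usage export: (user, date of query) pairs.
events = [
    ("ana", date(2024, 3, 4)),
    ("ben", date(2024, 3, 18)),
    ("ana", date(2024, 3, 20)),  # repeat usage counts the user only once
    ("cy", date(2024, 2, 27)),   # outside the month being measured
]
target_audience = ["ana", "ben", "cy", "dee"]

rate = monthly_active_rate(events, target_audience, 2024, 3)
meets_target = rate > 0.50  # the "greater than 50%" style of target
print(f"Monthly active rate: {rate:.0%}, target met: {meets_target}")
```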

Key takeaway: Identifying which success measure is right for your organization is often context-specific and dependent on your overarching goals. Identify measures that are most closely aligned to business value, and then set ambitious (yet attainable) targets that can be easily tracked once you’ve launched your solution.

Note: The financial value (e.g., cost-savings or revenue growth) attributed to executed analyses or use cases is also critical to identify and track in parallel with the impact realized from self-serve efficiencies.

Pitfall #3: Enablemiss

The pitfall:

Not investing enough time and effort into building out a robust enablement and communication strategy.

One of the most impressive aspects about the rise of ChatGPT was the almost instant ubiquity it achieved across both technical and non-technical users alike. This was driven in large part by its ease of use. Type in a prompt in a search bar and get results back instantly. No login required, no training—just a simple call-and-response.

While self-serve data analytics and insights platforms (like Tellius!) are moving rapidly toward this level of ease of use, there will always be a need for enablement, in some shape or form, to ensure users know what to expect of the platform and the best practices for engaging with the technology. This is especially true of new technologies that your user base may not yet be familiar with.

I’ve seen first-time users struggle with the following aspects of self-serve analytics:

- Understanding which data sources are available to query—and almost more importantly, which data sources are NOT available for query

- Understanding the dimensions and measures available for query and the naming convention of these fields relative to the terminology used by the business

- Knowing how to structure a query or the terms to use to get the best resulting visualization

- Setting expectations around how to interpret results and iterate on their analyses quickly (e.g., add additional filters, modify the chart type, or change an axis)

- Customizing or reformatting a data visualization output and sharing the analysis with stakeholders or colleagues

Without proper enablement and timely communication, users can quickly become frustrated or impatient, or they may lose interest in the platform altogether, putting your self-serve vision at risk.

How to avoid:

Early in the platform development phase, work with your self-service platform vendor to hold an enablement planning workshop, covering:

- A deep-dive understanding of target users: This includes understanding your users’ personas/roles in the company, their self-serve objectives (a “Jobs To Be Done” framework can help here), current pain points, current tech stack, and how many users need to be onboarded

- Change management communications: Detail how you will inform users of the new platform, training sessions and materials, follow-up resources, and post-launch communications

- Enablement timeline: Plan out the timeline for launching to each user cohort, sending comms, delivering training sessions, holding office hours, etc.

- Resources and materials: Plan the supplementary resources required, including data dictionaries, SharePoint or wiki pages, and self-service training sessions

- Live training sessions: Plan out the live enablement sessions with a high degree of interactivity and hands-on exercises

If you cover all of the bases above, your solution stands a higher chance of generating a better first impression with your user base and will provide positive momentum heading into the adoption phase.

Key takeaway: Often, enablement planning will take a backseat to more technical execution aspects. Make sure to prioritize enablement planning as early on in your initial onboarding phase as possible—ideally, at least 3-4 weeks before your targeted enablement launch date.

Pitfall #4: The Dreaded Post-Launch Plateau™

The pitfall:

Launching your self-service platform and then expecting usage to consistently build without regular actions and interventions.

We covered in the last pitfall the importance of enabling your users on a new technology. But enabling your users and then leaving them to their own devices is a recipe for a failed self-serve program.

Often, we see a ton of work and energy go into the initial launch and enablement of a new platform for end users. There’s then an understandable and natural inclination to let off the gas after the last enablement session is complete. But this is precisely when the accelerator needs to be pressed even harder.

How to avoid:

Reframe the first month after initial user enablement as the most critical phase to the success of your self-serve vision.

We typically advise our customers to deliver on five main adoption pillars:

Pillar 1 – Continued Enablement: This often includes having follow-up enablement sessions and more advanced functionality trainings, holding regular office hours, and ensuring self-service resources are being leveraged and updated.

Pillar 2 – Change Management Comms: Send out regular communications (or nudge campaigns) to your user base that include helpful resources, tips and tricks, important announcements, product updates, and celebratory stories or wins. I recommend my clients target at least one reach-out per week for the first four weeks post-launch and then ramp back to a monthly communication or newsletter.

Pillar 3 – Collecting User Feedback: Conduct several follow-up interviews or focus groups with select users and run a two-week survey post-launch to collect initial feedback and iterate quickly on any challenges faced.

Pillar 4 – Monitoring Adoption: Keep close tabs on who has been using the platform and, just as importantly if not more so, who has not.

Pillar 5 – Adoption Plays: This last pillar is a call to action to think outside the box on what can meaningfully improve the usage and satisfaction of your user base. This can take many different flavors, including gamified competitions, hackathons, usage-based prizes, in-person events, and so on. The key here is to get creative with your stakeholders and do something that will be memorable and generate excitement about the new platform.
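For Pillar 4, even a simple report that splits enabled users into active and inactive groups gives you a concrete list to follow up with. The sketch below assumes, hypothetically, that you can export each user's most recent activity date from your platform; the function name, the 30-day window, and the sample data are illustrative choices, not recommendations from any specific tool.

```python
from datetime import date, timedelta


def split_by_activity(enabled_users, last_seen, today, window_days=30):
    """Return (active, inactive) user lists; users never seen count as inactive."""
    cutoff = today - timedelta(days=window_days)
    active, inactive = [], []
    for user in sorted(enabled_users):
        seen = last_seen.get(user)
        if seen is not None and seen >= cutoff:
            active.append(user)
        else:
            inactive.append(user)
    return active, inactive


# Hypothetical data: everyone who attended enablement, plus the most recent
# usage date for those who have logged in at least once.
enabled = ["ana", "ben", "cy"]
last_activity = {"ana": date(2024, 3, 28), "ben": date(2024, 2, 10)}

active, inactive = split_by_activity(enabled, last_activity, today=date(2024, 4, 1))
print("Active:", active)
print("Needs a nudge:", inactive)
```

The inactive list is a natural audience for the nudge campaigns, office hours, and feedback interviews described in the other pillars.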

Key takeaway: Delivering on just one action or pillar from above is likely not enough to build and sustain adoption. Your user base is likely a busy group and needs regular reminders, opportunities for enablement, and outlets for feedback. Things do eventually calm down and reach a steady state, but don’t let off the gas too soon. By this point, you’ve made it too far and invested too much in your vision to not cross the finish line!

Pitfall #5: A tree falls in the woods...

The pitfall:

Not identifying and evangelizing the success of your users and the business value achieved across the organization.

The timeless adage “If a tree falls in the woods, and no one is there, does it make a sound?” also applies well to self-serve data analytics initiatives.

As with any transformative digital initiative, organizations often successfully launch to end users, only to lose momentum or support from senior leaders when the business value achieved is not communicated effectively. Moreover, beyond quantifiable numbers, executive teams can be far removed from the stories and successes of end users.

How to avoid:

Simply put: Communicate the value achieved—loudly and often.

We can break value down into two categories: (1) quantitative and (2) qualitative.

Quantitative value: Going back to Pitfall #2 on success measures, if you have aligned your success measures with leadership and organizational goals upfront, then sharing your attainment of those measures becomes an easier process. Track those measures and provide status updates on attainment at 30-/60-/90-day milestones post-launch.

And what if the success measures lag behind your initial targets? Communicate the future initiatives or actions that will lead to improvements in your success measures and any shifts in the expected timeline for hitting your goals.

Qualitative value: As outlined in Pitfall #4, it is critical to gather success stories from your user base. These are often the trees that fall with no one around to hear them. If a user is able to pull out a nugget of insight and use it to make a decision or inform leadership faster than expected, that is gold! Work with your users to get a ballpark estimate of the value achieved by running this analysis or uncovering a particular insight. These success stories and quotes are an essential counterpart to your numbers because they humanize the impact made.

Key takeaway: Once you collect your quantitative and qualitative assessments of value achieved, make sure your stakeholders hear it! This can take the form of executive readout presentations, regular newsletters, roadshows, webinars, and conference events.

Learn more about self-service analytics

Get release updates delivered straight to your inbox.

No spam—we hate it as much as you do!

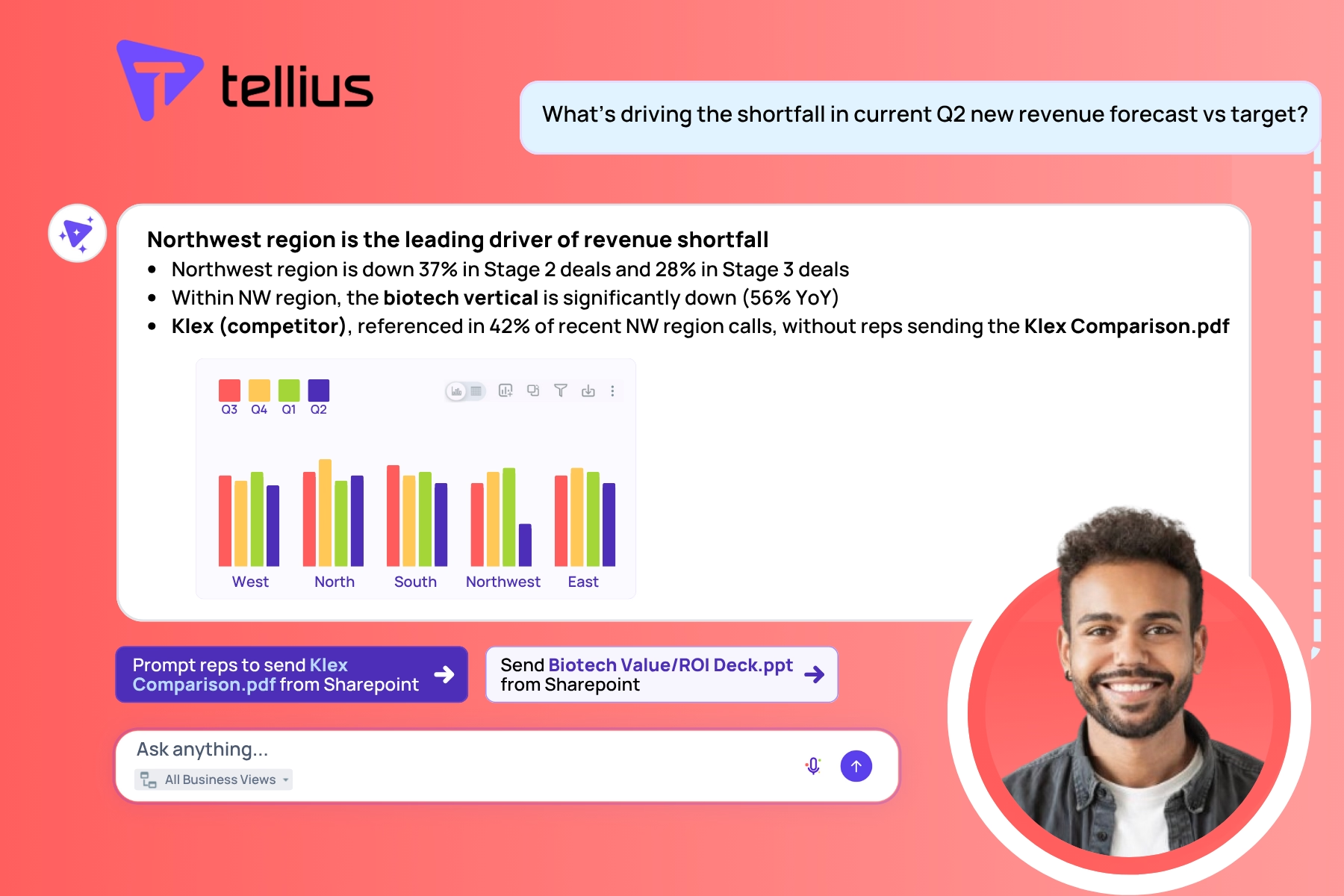

RevOps Intelligence Redefined: AI-Powered Agents Meet Unified Knowledge Layer

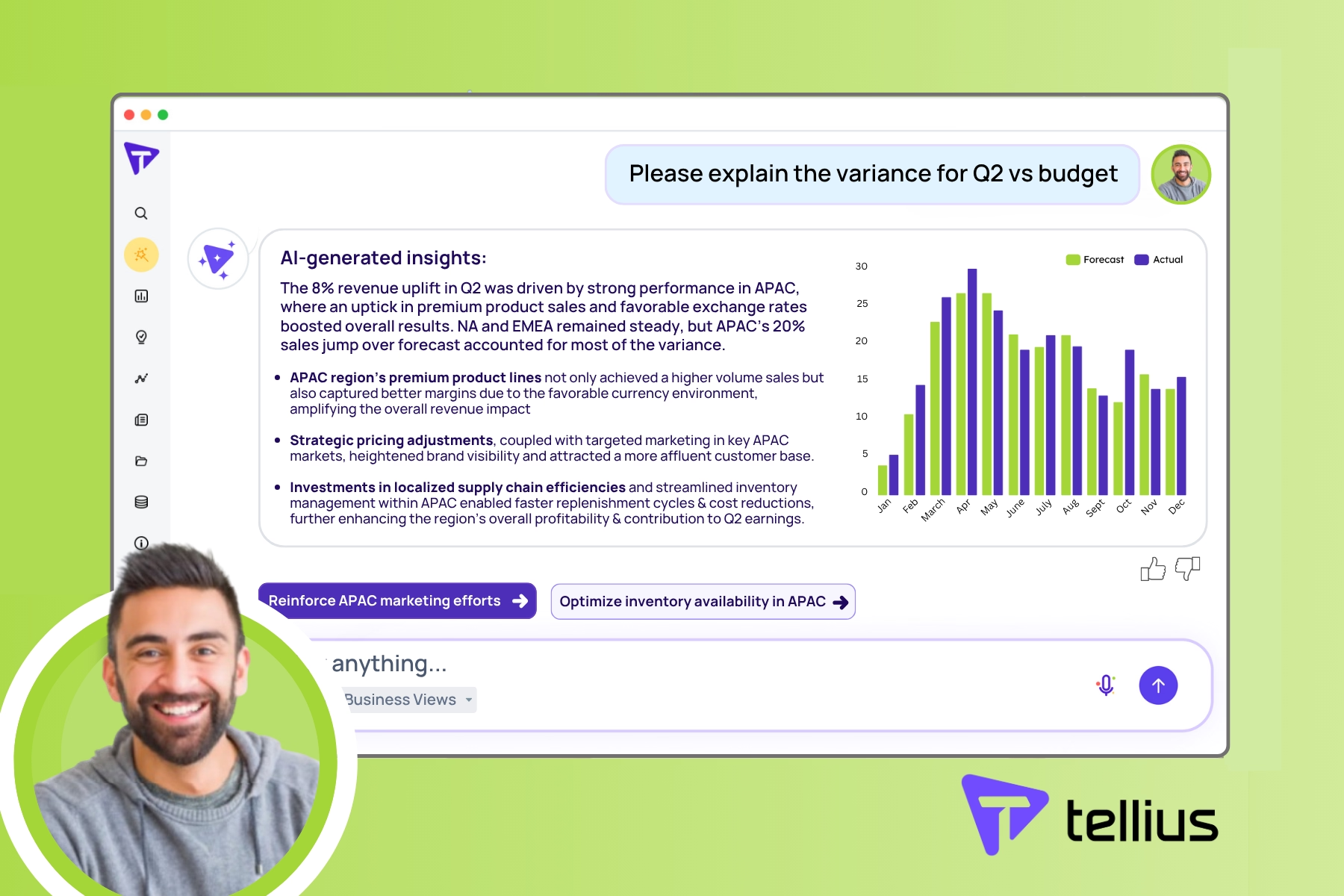

Learn how AI-powered variance analysis is transforming how organizations uncover and act upon financial insights.

How AI Variance Analysis Transforms FP&A

Learn how AI-powered variance analysis is transforming how organizations uncover and act upon financial insights.

6 Key Takeaways from the 2023 Gartner Data & Analytics Summit

This year’s Gartner Data & Analytics Summit in Orlando was a jam-packed three days of 220+ sessions, keynotes, and networking opportunities attended by over 4,000 data and analytics leaders and industry experts.